Stokoe

Accessibility architecture for real-time systems

Captions, transcripts, and assistive signals as append-only events—transported independently of speech models, UI frameworks, or vendor APIs.

Inspectable. Auditable. Built for compliance.

Accessibility is not a feature. It's the transport layer.

Most systems bolt accessibility on at the edges—after models, after UI, after decisions are made. The result: captions that lag, transcripts that vanish, audit trails that don't exist.

Stokoe inverts that model. Accessibility signals are generated, transported, and preserved at the system level—decoupled from speech recognition lifecycles and rendering pipelines.

Architecture Principles

Transport-Layer Accessibility

Captions and transcripts are first-class events in the transport layer, not UI artifacts derived after the fact.

Append-Only Events

Every utterance emits an immutable event with a stable ID and dual timestamps. No overwrites. No deletions.
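As a minimal sketch of what "no overwrites, no deletions" can look like in code: the `CaptionEvent` fields mirror the event shape shown on this page, while the `AppendOnlyLog` class and its in-memory storage are hypothetical and not taken from the Stokoe specifications. It also assumes each event gets its own ID.

```python
from dataclasses import dataclass
from typing import Iterator, List, Set


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class CaptionEvent:
    id: int
    audio_timestamp_ms: int  # position of the speech in the audio
    emit_timestamp_ms: int   # when the event left the system
    text: str
    is_final: bool


class AppendOnlyLog:
    """Hypothetical in-memory log exposing append and read, but no update or delete."""

    def __init__(self) -> None:
        self._events: List[CaptionEvent] = []
        self._seen_ids: Set[int] = set()

    def append(self, event: CaptionEvent) -> None:
        # Reusing an ID would amount to an overwrite, so reject it.
        if event.id in self._seen_ids:
            raise ValueError(f"event id {event.id} is already recorded")
        self._seen_ids.add(event.id)
        self._events.append(event)

    def __iter__(self) -> Iterator[CaptionEvent]:
        # Iterate over a snapshot so callers never hold the internal list.
        return iter(tuple(self._events))

    def __len__(self) -> int:
        return len(self._events)
```

A correction to earlier text would be appended as a new event with a new ID, leaving the earlier event in place for audit.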

Interim + Final Preserved

Partial, interim, and final text are all captured. Latency is observable and measurable per event.
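Per-event latency falls out of the dual timestamps. The sketch below assumes both timestamps are measured on the same session clock, as the sample event on this page suggests; the function names are illustrative only.

```python
from statistics import median
from typing import Dict, List


def emit_latency_ms(event: Dict) -> int:
    """Delay between when the speech occurred and when the event was emitted."""
    return event["emit_timestamp_ms"] - event["audio_timestamp_ms"]


def latency_summary(events: List[Dict]) -> Dict[str, float]:
    """Summarize latency across a non-empty list of events."""
    if not events:
        raise ValueError("at least one event is required")
    latencies = [emit_latency_ms(e) for e in events]
    return {
        "count": len(latencies),
        "median_ms": median(latencies),
        "max_ms": max(latencies),
    }
```

For the sample event (audio at 65321 ms, emitted at 65398 ms), `emit_latency_ms` returns 77.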

Privacy-Preserving

No persistent voiceprints. Ephemeral speaker markers are optional. Cryptographic hygiene throughout.

Inspectable & Auditable

Every event can be replayed, inspected, and verified. Built for compliance reviews and dispute resolution.
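One way to make a replayed stream verifiable is a hash chain, where each digest covers the event and the digest before it. This is an illustrative technique chosen for the sketch, not a mechanism this page attributes to Stokoe; `GENESIS`, `seal`, and `verify` are hypothetical names.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # assumed starting value for the chain


def chain_digest(prev_digest: str, event: Dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal events hash equally.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_digest + payload).encode("utf-8")).hexdigest()


def seal(events: List[Dict]) -> List[str]:
    """Return one digest per event, each depending on all earlier events."""
    digests = []
    prev = GENESIS
    for event in events:
        prev = chain_digest(prev, event)
        digests.append(prev)
    return digests


def verify(events: List[Dict], digests: List[str]) -> bool:
    """Replay the events and confirm they reproduce the recorded digests."""
    return len(events) == len(digests) and seal(events) == digests
```

Editing, reordering, or dropping any event changes every digest from that point on, so a reviewer holding the recorded digests can detect it.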

Vendor-Neutral

Speech, vision, and language models are swappable. Stokoe is the spine—not the brain.
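A rough sketch of the spine-versus-brain split: the transport depends only on a small recognizer interface, so engines can be swapped without touching event handling. The `Recognizer` protocol, `ScriptedRecognizer`, and `transport` are assumptions made for illustration, not part of any published Stokoe API.

```python
import itertools
from typing import Callable, Dict, Iterable, Iterator, List, Protocol, Tuple

# (audio_timestamp_ms, text, is_final) as produced by any speech engine
Hypothesis = Tuple[int, str, bool]


class Recognizer(Protocol):
    def recognize(self, audio: Iterable[bytes]) -> Iterator[Hypothesis]:
        ...


class ScriptedRecognizer:
    """Stand-in engine that replays canned hypotheses; a real engine would decode audio."""

    def __init__(self, script: List[Hypothesis]) -> None:
        self._script = script

    def recognize(self, audio: Iterable[bytes]) -> Iterator[Hypothesis]:
        yield from self._script


def transport(
    recognizer: Recognizer,
    audio: Iterable[bytes],
    now_ms: Callable[[], int],
    first_id: int = 1,
) -> Iterator[Dict]:
    """Wrap engine output in events; IDs and emit times come from the transport."""
    ids = itertools.count(first_id)
    for audio_ts, text, is_final in recognizer.recognize(audio):
        yield {
            "id": next(ids),
            "audio_timestamp_ms": audio_ts,
            "emit_timestamp_ms": now_ms(),
            "text": text,
            "is_final": is_final,
        }
```

Because IDs and emit timestamps are assigned by the transport rather than the engine, replacing the engine does not change the event contract.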

Local-First

Process at the edge when possible. Lower latency, less data exposure, better resilience.

Security as Baseline

Security and accessibility are co-requirements, not tradeoffs. Both are addressed at the architecture level.

What Stokoe Produces

Structured, append-only events—not fragile UI text. Each event carries a stable ID, dual timestamps (audio time and emit time), and an interim/final flag.

caption.event {
  id: 1842,
  audio_timestamp_ms: 65321,
  emit_timestamp_ms: 65398,
  text: "We should start the meeting now.",
  is_final: false
}

One event stream powers multiple outputs:

- Live captions

- Post-session transcripts

- Compliance audit logs

- Latency diagnostics

Same source of truth. No reconciliation required.
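The outputs listed above can be sketched as plain functions over one ordered list of events. Everything here is illustrative: the function names are hypothetical, and the transcript rule (final events only, ordered by audio time) is an assumption rather than documented behavior.

```python
from typing import Dict, List


def live_caption(events: List[Dict]) -> str:
    """Text of the most recently emitted event, interim or final."""
    return events[-1]["text"] if events else ""


def transcript(events: List[Dict]) -> str:
    """Final text only, ordered by where it occurred in the audio."""
    finals = [e for e in events if e["is_final"]]
    finals.sort(key=lambda e: e["audio_timestamp_ms"])
    return "\n".join(e["text"] for e in finals)


def audit_log(events: List[Dict]) -> List[str]:
    """One line per event, interim events included, in emit order."""
    return [
        f'id={e["id"]} audio_ms={e["audio_timestamp_ms"]} '
        f'emit_ms={e["emit_timestamp_ms"]} final={e["is_final"]}'
        for e in events
    ]


def latencies_ms(events: List[Dict]) -> List[int]:
    """Per-event delay between audio time and emit time."""
    return [e["emit_timestamp_ms"] - e["audio_timestamp_ms"] for e in events]
```

None of these functions writes back to the event list, which is why the outputs cannot drift apart.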

Accessibility Without Guesswork

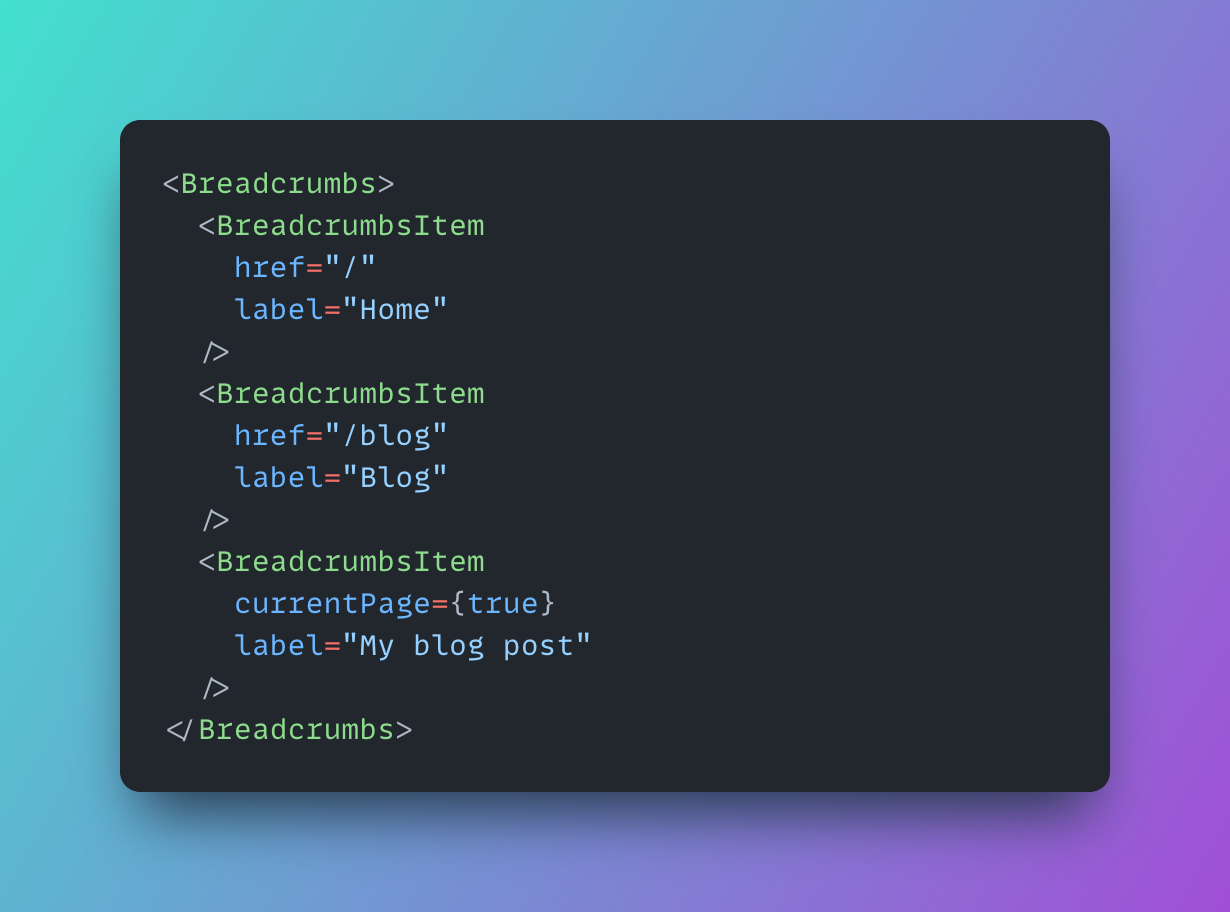

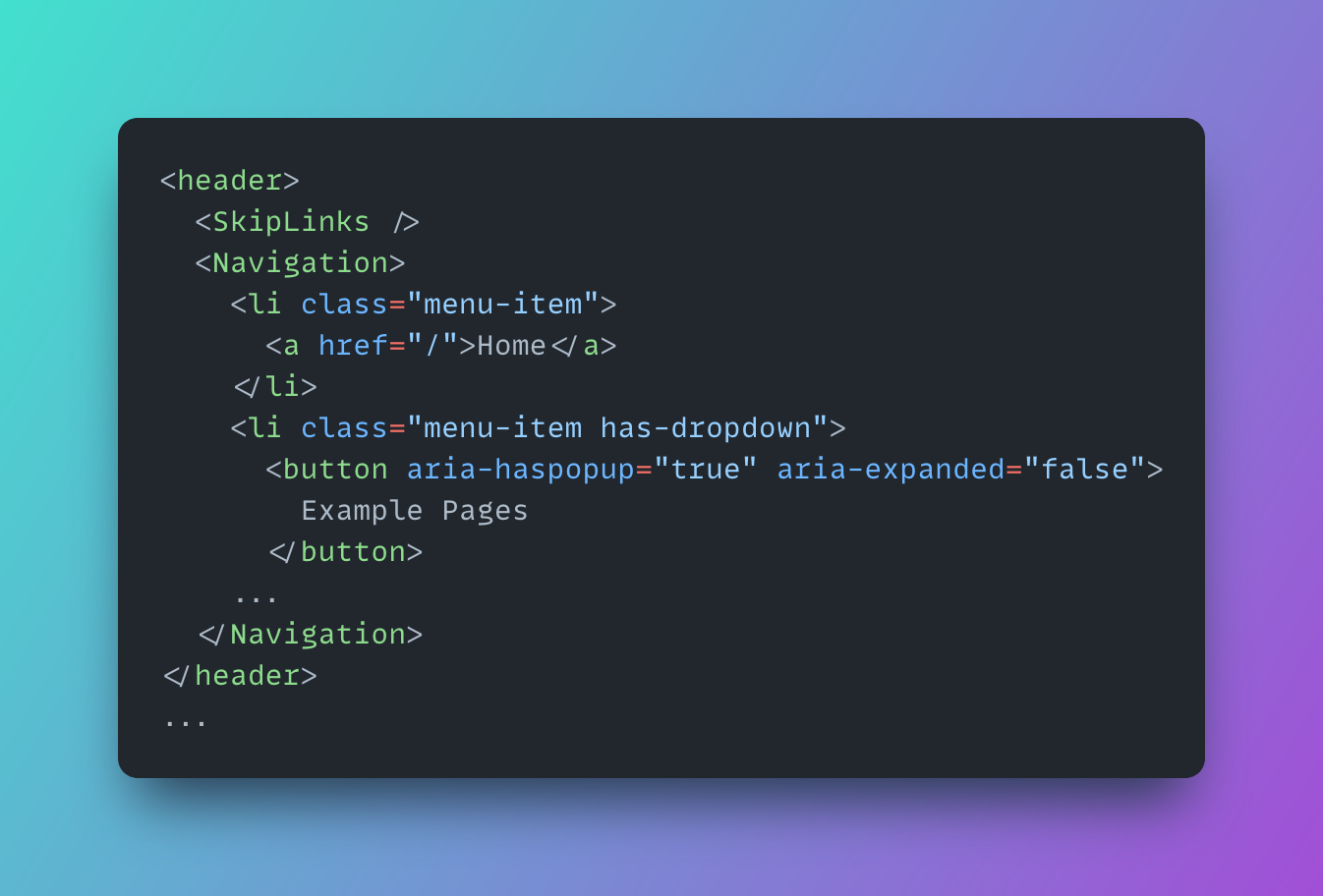

Reference implementations show how the architecture behaves in real systems—not how UI should look.

The architecture is the product. Components are proofs of correctness.

Designed for Real Standards

Built to support compliance, not just user experience:

- WCAG 2.2 AA caption and transcript requirements

- FCC Part 14 accessibility obligations

- Real-time communication latency constraints

- Audit trail and dispute resolution needs

Compliance is achievable when the system is correct by construction.

Read the Specifications

Stokoe is infrastructure. The specifications define how it works.

Technical documentation for event-driven caption transport, world-model alignment, and accessibility architecture.

View Documentation

What is Stokoe?

An accessibility architecture for real-time communication systems. It defines how captions and transcripts move through systems as first-class events—not how they're rendered or what speech model generates them.

Is Stokoe an AI model?

No. Stokoe transports and preserves accessibility signals. Speech and language models are swappable upstream components. Stokoe is the spine, not the brain.

Who is Stokoe for?

Teams building calling platforms, meeting systems, or any real-time media service where captions, transcripts, or accessibility audit trails matter.

How does this relate to WCAG?

WCAG defines user-visible outcomes. Stokoe addresses the system-level causes that prevent teams from meeting them—append-only events, stable IDs, and measurable latency make compliance achievable by construction.

Is Stokoe production-ready?

Yes. The architecture handles the latency, scale, and auditability requirements of production communication platforms—not hypothetical scenarios.

System Guarantees

- WCAG 2.2 AA: supported

- Stable IDs: per event

- Dual timestamps: audio + emit

- Append-only events